Generative AI is no longer a futuristic experiment. It’s already woven into daily work: drafting texts, summarising documents, analysing information, and supporting decisions. For many employees, GenAI has quietly become part of how work gets done. And yet, an increasing number of organisations are doing the opposite of adopting it. They are banning it. Often completely.

These internal GenAI bans usually come from a very understandable place: concerns about data leakage, regulatory compliance, intellectual property, reputational risk, or simply a lack of organisational readiness and AI maturity. In some cases, those concerns are more than justified. Recent incidents involving hallucinated outputs in official reports have made that painfully clear.

But what actually happens to productivity when organisations ban GenAI?

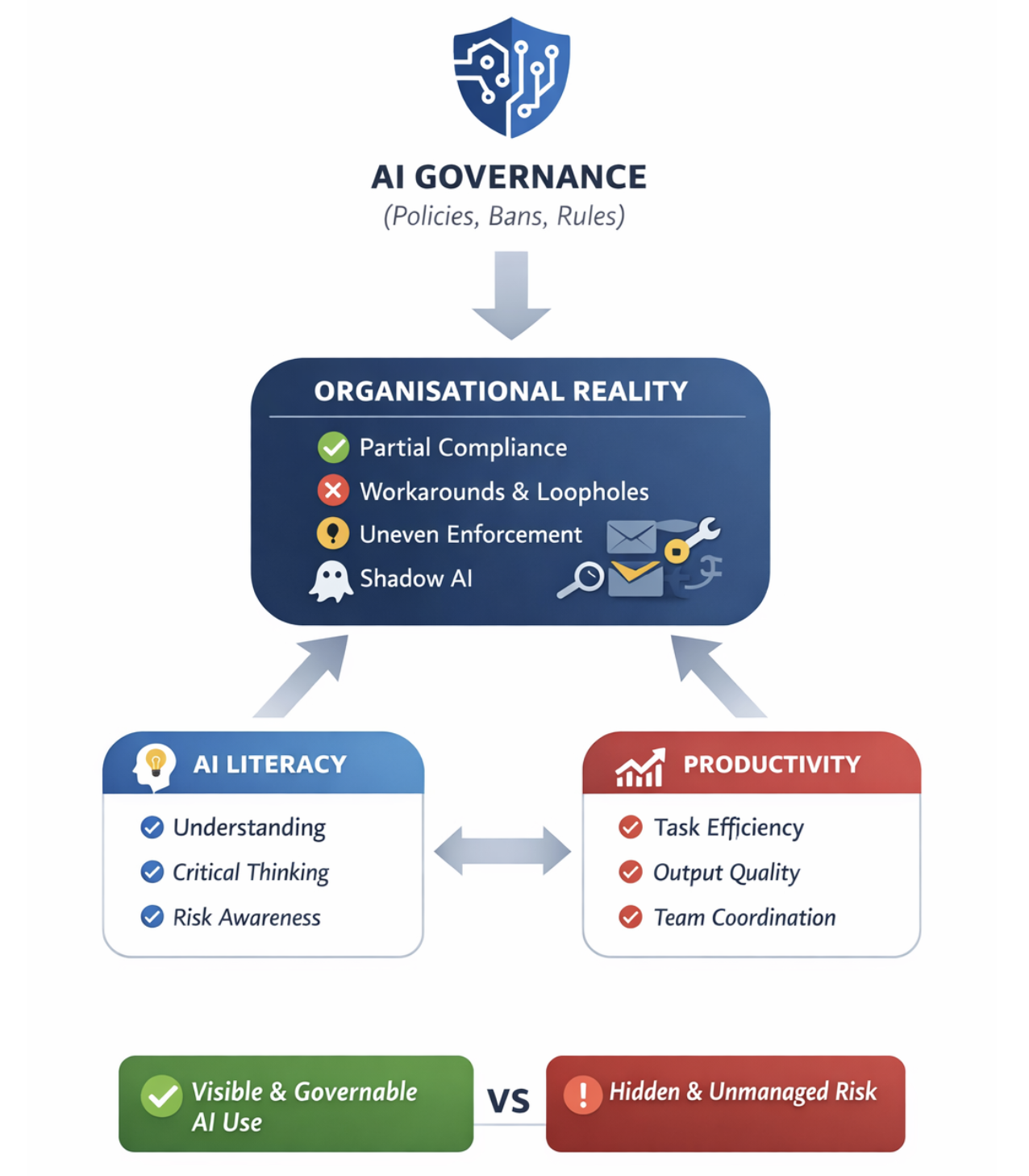

Governance Decisions Are Never Neutral

In conversations about AI Governance, bans are often discussed as necessary safeguards. But a ban is also an organisational intervention, just like a restructuring, a policy change, or new tooling. And organisational interventions have consequences.

We at Clever Republic work daily at the intersection of AI Governance, AI Literacy, and organisational design, and we see a recurring pattern: governance choices are often framed as purely defensive. Risk mitigation first, efficiency later, if at all. But governance decisions don’t operate in a vacuum. They shape how people work, what tools they rely on, how much discretion they have, and how much trust they feel. That means they can influence productivity in subtle but very real ways.

Why “Just Comparing Firms” Doesn’t Work

At first glance, it might seem easy to answer the productivity question. Compare firms that ban GenAI with those that don’t, and see who performs better. Unfortunately, it’s not that simple. Firms that impose GenAI bans are not random. They are often:

- More heavily regulated

- More risk-averse

- Operating with sensitive data

- Already embedded in complex compliance structures

These same characteristics also affect productivity, regardless of AI. So if we observe differences, we don’t know what caused what.

This is a classic AI Governance trap: mistaking correlation for causation.

Looking at Bans as Policy Interventions

Instead of asking “Which firms perform better?”, a more useful question is:

What changes inside a firm after a GenAI ban is introduced, compared to what would have happened if it hadn’t been?

That framing treats a GenAI ban as what it really is: a policy intervention. By looking at firms before and after a ban and comparing them to similar firms that didn’t implement one, we can start isolating the effect of the governance decision itself, rather than the firm’s underlying character. This kind of thinking comes straight from causal inference, but its implications are deeply practical: it forces us to acknowledge that governance decisions have measurable organisational effects.
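The before-and-after comparison described above is commonly known as a difference-in-differences estimate. A minimal sketch in Python, assuming a single productivity score per firm and period, could look like this. The function name and all numbers are hypothetical and invented purely for illustration; they are not results from any study. The estimate is only meaningful under the assumption that both groups would have followed similar trends without the ban.

```python
from statistics import mean


def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Change in the treated group minus change in the comparison group."""
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


# Hypothetical productivity scores, invented purely for illustration.
banned_before = [100.0, 102.0, 98.0]      # firms that later introduce a ban
banned_after = [101.0, 103.0, 99.0]       # the same firms after the ban
comparison_before = [90.0, 92.0, 88.0]    # similar firms without a ban
comparison_after = [95.0, 97.0, 93.0]     # the same firms, later period

effect = diff_in_diff(banned_before, banned_after,
                      comparison_before, comparison_after)
print(effect)  # -4.0
```

In this made-up example the banning firms start from a higher level, so a simple comparison of levels would make the ban look harmless. The estimate instead compares changes: the banning firms improved by 1 point while the comparison firms improved by 5, giving an estimated effect of -4.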

The Reality of Shadow AI

Beyond that, there’s another uncomfortable truth organisations often underestimate. A GenAI ban does not mean GenAI disappears. Employees are creative. If they can’t use AI on their work laptop, many will use it on a personal device. Or a private account. Or through tools that aren’t monitored. This phenomenon is called ‘Shadow AI’, and it means that bans frequently lead to partial compliance at best. From a governance perspective, that matters, because what you end up measuring is not a clean ban but a mix of:

- Formal restriction

- Informal continuation

- Uneven enforcement

- Unequal access across teams

Ironically, this can make organisations both less productive and less safe, because AI use becomes invisible rather than governed.

Productivity Isn’t Just About Tools

One of the most important insights from this research perspective is that GenAI bans don’t just affect productivity by removing a tool. They also send signals. They signal how much autonomy employees have, what management prioritises, and how much trust exists between organisation and workforce. Those signals shape behaviour. In some contexts, a ban may slow work down. In others, it may push people toward workarounds. In still others, it may reduce experimentation altogether, which has long-term innovation consequences that don’t show up immediately in metrics.

What This Means for AI Governance in Practice

So what should organisations do? The takeaway isn’t “never ban GenAI.” Sometimes restrictions are necessary. But blanket bans without literacy, alternatives, and governance maturity come at a cost. A more sustainable approach starts with:

- Understanding where AI adds value

- Knowing where risks actually materialise

- Equipping people to use AI responsibly

- Making usage visible rather than hidden

In other words: governance choices should be evaluated not only on compliance grounds, but also on organisational impact.

From Governance as Control to Governance as Design

At Clever Republic, we see AI Governance not as a brake, but as a design challenge. Good governance doesn’t just prevent harm; it shapes how technology fits into work in a way that is sustainable, responsible, and productive. That means asking harder questions:

- What behaviour are we actually encouraging with this policy?

- What workarounds might it create?

- What capabilities are we missing if banning feels like the only option?

GenAI is already changing how work gets done. The real question is whether organisations want that change to be visible and governable or hidden and uncontrolled.

If you would like to discuss this topic with us, reach out at info@cleverrepublic.com or contact one of our AI colleagues.